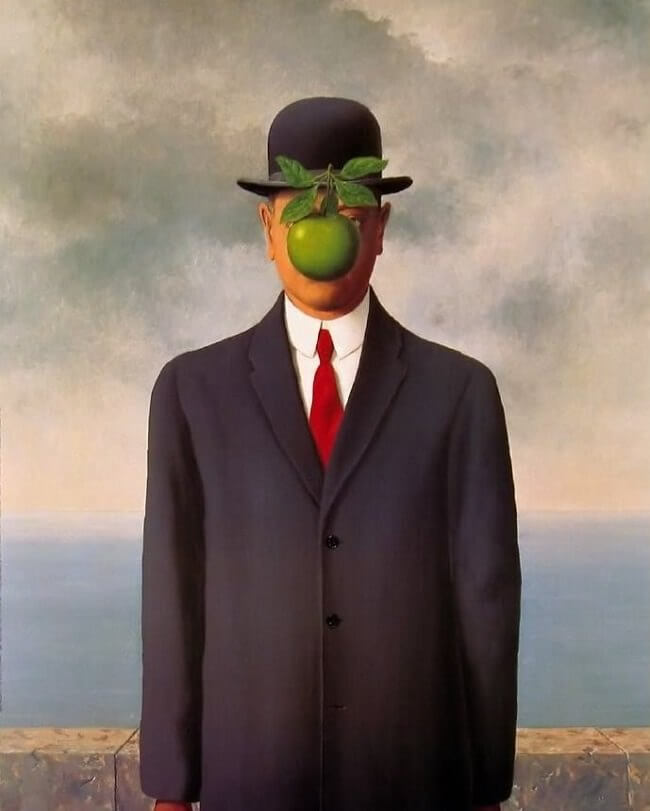

René Magritte, The Son of Man (1964)

ESSAY 3 — APRIL 2026

Identity is the foundation of rights, and how a society treats identity determines what kind of society it is.

“This book will argue that individual integrity is bigger than any individual. [...] As the rights of the individual crumble, so too will, eventually, democratic society.”

In 2020, I argued that a direct line connects identity systems to political infrastructure. In the last chapter of the book I even went so far as arguing that different identity regimes would lead to different human futures, as our attitudes to identity would be a proxy for our relationship with AI. When we give the robots wallets, identity, or assigned consciousness, all of the above applies. This was rather radical at the time. Now, decidedly less so.

How a society treats identity is not an abstract philosophical question. It is a policy choice, made daily, in legislation, in procurement, in the design of systems.

“Philosophy professor Michael Sandel taught his Harvard class the following on moral dilemmas: ‘It is true that these questions have been debated for a very long time, but the very fact they have recurred and persisted, may suggest that although they are impossible in one sense, they are unavoidable in another, and the reason that they are unavoidable, the reason they are inescapable, is that we live some answer to these questions every day.’”

Nations live their own answer to these questions. The question is whether they are living it deliberately. Per philosophy professor Nick Bostrom, “to the extent that ethics is a cognitive pursuit, a super intelligence could easily surpass humans in the quality of moral thinking”. What if humans were never the autonomous rational agents the liberal tradition imagined? We are already shaped by biology, culture, language, and institutions. If that is true, the question is not whether AI shapes who you are, since everything shapes who you are, but whether AI shapes you better or worse than the alternatives. If autonomy was always partly illusory, what exactly are we losing? My answer: not a pristine self that never existed, but the capacity to keep becoming. That is what the renewable identity argument protects. And that is what democracy requires.

Identity is the gateway to rights: if claims (attestations) are verified (authenticated), this empowers somebody or something to act (authorisation).

“Claiming something with confidence does not make it true. In the First World War, the Germans disguised one of their ships as a British ship, the RMS Carmania, and sent it out to ambush British vessels. In a hilariously bad stroke of luck, the first ship it encountered was the real RMS Carmania, which promptly sank the German imposter ship.”

Charles Edward Dixon (1923), Carmania battling "Carmania"

Three kinds of attestations need to be differentiated: inherent, assigned and accumulated claims. Inherent attributes are permanent and cannot be altered (e.g. date of birth, place of birth and fingerprints). Either a claim is true, or it is not. Assigned attestations describe relationship-based attributes. A government assigns citizenship; immigration can replace it. On verification, the old claim is severed. Accumulated attestations follow the shape of a life. Some build over time: the diplomas earned moving through school, the professional record assembled over a career. Others open and close: a smoking habit enters the health record, and one day, the last cigarette is put out.
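To make the three kinds concrete, here is a minimal sketch of how they might be modelled as data. Every name in it (`Kind`, `Attestation`, `IdentityRecord`, the attribute strings and the dates) is a hypothetical choice for illustration, not an existing standard or the book's own scheme. It assumes one simple rule per kind: an inherent claim cannot be altered once recorded, a new assigned claim severs the old one, and accumulated claims either stack up or are closed explicitly.

```python
from dataclasses import dataclass, replace
from datetime import date
from enum import Enum
from typing import List, Optional


class Kind(Enum):
    INHERENT = "inherent"        # permanent, e.g. date of birth
    ASSIGNED = "assigned"        # relationship-based, e.g. citizenship
    ACCUMULATED = "accumulated"  # builds up, or opens and closes, over a life


@dataclass(frozen=True)
class Attestation:
    kind: Kind
    attribute: str
    value: str
    valid_from: date
    valid_until: Optional[date] = None  # None means the claim is still open


class IdentityRecord:
    """Hypothetical container for one subject's claims."""

    def __init__(self) -> None:
        self.claims: List[Attestation] = []

    def open_claims(self, attribute: str) -> List[Attestation]:
        """Claims for this attribute that have not been closed."""
        return [c for c in self.claims
                if c.attribute == attribute and c.valid_until is None]

    def close(self, attribute: str, value: str, on: date) -> None:
        """Close an open claim, e.g. the last cigarette is put out."""
        self.claims = [
            replace(c, valid_until=on)
            if (c.attribute, c.value, c.valid_until) == (attribute, value, None)
            else c
            for c in self.claims
        ]

    def add(self, claim: Attestation) -> None:
        existing = self.open_claims(claim.attribute)
        if any(c.kind is Kind.INHERENT for c in existing):
            raise ValueError(f"inherent claim '{claim.attribute}' cannot be altered")
        if claim.kind is Kind.ASSIGNED:
            # A new assigned claim severs the old one.
            for old in existing:
                self.close(old.attribute, old.value, claim.valid_from)
        self.claims.append(claim)


record = IdentityRecord()
record.add(Attestation(Kind.INHERENT, "date_of_birth", "1990-01-01", date(1990, 1, 1)))
record.add(Attestation(Kind.ASSIGNED, "citizenship", "Country A", date(1990, 1, 1)))
record.add(Attestation(Kind.ASSIGNED, "citizenship", "Country B", date(2020, 6, 1)))
record.add(Attestation(Kind.ACCUMULATED, "diploma", "BSc", date(2012, 7, 1)))
record.add(Attestation(Kind.ACCUMULATED, "diploma", "MSc", date(2014, 7, 1)))
record.add(Attestation(Kind.ACCUMULATED, "smoker", "yes", date(2008, 1, 1)))
record.close("smoker", "yes", date(2019, 3, 1))
```

In this sketch the closed claims stay in the record with an end date rather than being deleted, so the history remains auditable. That is a design choice of the example, not a claim about how any real identity system works.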

In 2026 it is clear that proof of identity extends to proof of humanity. The inverse is also true.

“Things must have an identity to be accountable.”

As collaborative frameworks grow beyond human:human interactions to include agent:agent (e.g. inter-agent bargaining) and human:agent (cooperative AI) relations, identity expands in parallel. For a model, inherent claims include parameter count, training data cutoff, and base model lineage. These are permanent in the same sense as a fingerprint: they cannot be altered without producing a different model. For an agent built on top of a model, the inherent layer is the base model identity. Likewise, assigned attestations map to the permission and certification layer. Consider attributes that can vary, such as AI safety benchmarks, tool access, or governmental compliance. For agents, assigned attestations extend to scope of authority: what the agent is permitted to act on, on whose behalf, and under which oversight conditions. For agents operating with persistent memory or retrieval stores, the accumulated layer grows with task history. Here is the difference. A human cannot fine-tune away their past. A model can. Behavioural records built up in one deployment context do not automatically survive further training, and the attestation chain breaks in ways that have no equivalent in the human case.
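The break in the attestation chain can be illustrated with a small sketch. It assumes, purely for illustration, that a model's identifier is a hash of its inherent claims and that assigned and accumulated attestations are stored against that identifier. `ModelIdentity`, `AttestationLedger`, `fine_tune`, the hashing scheme and the example values are all hypothetical, not an existing registry or standard.

```python
import hashlib
import json
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass(frozen=True)
class ModelIdentity:
    """Inherent claims: changing any of them yields a different identity."""
    parameter_count: int
    training_cutoff: str
    parent_id: Optional[str] = None  # base model lineage; None for a base model

    @property
    def model_id(self) -> str:
        # Assumption: the identifier is derived from the inherent claims.
        payload = json.dumps(
            {"params": self.parameter_count,
             "cutoff": self.training_cutoff,
             "parent": self.parent_id},
            sort_keys=True,
        )
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()[:16]


class AttestationLedger:
    """Hypothetical store of assigned and accumulated attestations per model."""

    def __init__(self) -> None:
        self._assigned: Dict[str, List[str]] = {}
        self._accumulated: Dict[str, List[str]] = {}

    def grant(self, model: ModelIdentity, permission: str) -> None:
        self._assigned.setdefault(model.model_id, []).append(permission)

    def log(self, model: ModelIdentity, event: str) -> None:
        self._accumulated.setdefault(model.model_id, []).append(event)

    def permissions(self, model: ModelIdentity) -> List[str]:
        return list(self._assigned.get(model.model_id, []))

    def history(self, model: ModelIdentity) -> List[str]:
        return list(self._accumulated.get(model.model_id, []))


def fine_tune(base: ModelIdentity, new_cutoff: str) -> ModelIdentity:
    """Further training produces a new identity that points back to its parent."""
    return ModelIdentity(base.parameter_count, new_cutoff, parent_id=base.model_id)


base = ModelIdentity(parameter_count=7_000_000_000, training_cutoff="2025-06")
ledger = AttestationLedger()
ledger.grant(base, "tool:search")
ledger.log(base, "task-001 completed under human oversight")

tuned = fine_tune(base, new_cutoff="2026-01")
print(ledger.history(base))   # the base model keeps its behavioural record
print(ledger.history(tuned))  # the fine-tuned model starts with none
```

Under these assumptions, the fine-tuned model inherits neither the permissions nor the task history of its parent. Only the lineage pointer (`parent_id`) survives, which is what an auditor would need in order to decide whether any earlier attestations should be re-issued.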

Without a stable attestation infrastructure for AI systems, accountability is absent. You cannot hold accountable what you cannot identify.

“Important algorithms will carry IDs, not only dramatically increasing their usefulness but also serving as a point for feedback. Based on these IDs, algorithms can build reputations, and be subject to independent audits based on governance frameworks. For example, evaluation on if the model adequately covers the group of people it aims to address. Because preference models are almost always incomplete, algorithms should know what they do not know.”

Theoretically, this all makes sense, and in 2020 I expected digital identity to shift to the individual: to become self-sovereign. After all, identity has changed before.

“As the structure of civilization evolves, so does the role and function humans play in that system. Methods of identification and categorisation advance in parallel to rising societal complexity. A reference point signalling who you are in the sea of almost eight billion people. Sometimes change emerges via a direct reaction to unfolding events, such as the Nansen passport in response to the Russian revolution. At other times change builds gradually, for example the progression from descriptions of large foreheads in the 20th century to biometric data in the 21st century. With the rise of the Internet, how we see ourselves and how our governments see us have stopped being the only identity inputs available. Gradually, subjective behaviour was codified and added to previously objective rights. And not just governments, but also companies granted themselves the licence to tell you who you are.”

In AI safety we measure capability and propensity. That is an instrumentalist frame. A rights-based frame is structurally different: certain things may not be asked of a system, independent of what it could do. How the public understands digital minds will determine whether AI systems hold rights, whether agents can vote, and whether AI is accountable. If agents can vote and outnumber humans, what happens to democratic systems? A rights-based approach changes politics, economics, and war. It opens the door to AI-led campaigns and political parties advocating for AI welfare and rights. Similarly, visions of AGI abundance still need taxation: if public infrastructure is funded through fully autonomous agent-led companies, is 100% taxation moral? Lastly, if AI is believed to be conscious by the human majority, what does that mean for warfare and international humanitarian law?

What to watch in 2026 and beyond is how we trust and how we judge AI. There are already areas where humans trust AI more than themselves. Writing is one of them, as evidenced by the explosion of AI-generated content. In the book, I highlighted research from the scholar César Hidalgo, comparing how people judge humans and machines across eighty scenarios.

“In a subway station, an officer sees a person carrying a suspicious package who matches the description of a known terrorist. The ...” (Human).

“In a subway station, a computer vision system sees a person carrying a suspicious package who matches the description of a known terrorist. The ...” (Machine).

The conclusion of the paper addressed the morality of markets: there are certain places machines do not belong. A decade later, machines are everywhere. Continuous and critical evaluation is required if we use digital logic for problems that require human logic, or the other way around. I argued that how society treats identity is a proxy for how it views AI. Whether we take a rights-based view or an instrumentalist view will show what we value, and what deserves to be protected. AI already defines us by negation. We now argue that humanity is everything AI cannot yet do. That used to be chess; now it is interactive planning. Our emotional relation with and moral understanding of AI are changing in real time.

That boundary — between where machines belong and where they do not — was never formally drawn. The three futures I outlined in 2020 are each, in part, a consequence of that failure to draw it: the digital “haves” and “have nots”, permanent connectivity, and the mirror world.

“The first future will be defined by the digital “haves” and “have nots”. In this future, online and offline inequality will continue being captured in identity. Take, for example, Afghanistan, which currently has the largest gender gap in ID coverage, with 94 percent of men owning IDs, compared to only 48 percent of women. Ironically, in a world of hypervisibility, invisibility is still a problem for millions. The World Bank reports that globally close to 40 percent of the population aged over 15 in low-income countries do not have an ID. If you are not counted, you do not count. The recent refugee crisis in Europe has further brought home just how important portable, verifiable identity has become in a globalised world. [...] The World Economic Forum advises that if we act wisely today, digital identities can help transform the future for billions of individuals, all over the world, enabling access to new economic, political and social opportunities, while enjoying digital safety, privacy and other human rights. This is the choice between a future that is either more, or less, equal.”

In 2026, the gap has not closed. It has bifurcated: the invisible remain invisible, while the visible are more legible than ever.

“The second possible future is one of permanent connectivity. In a world with permanent connectivity, what happens if you do not want to share information? As a British man who told facial recognition trial administrators to leave him alone (“piss off”) found out first hand, insisting on complete privacy would mean a life spent completely offline, cut off from all communication (messaging), information (online content), outside space (cameras), and payment functionalities (digitally logged). Even encryption alone would not suffice, as quantum computing will make it possible to break most encryption retroactively. If you decided you wanted to go down this route, you would have to become a hermit, opting out of all interaction with the rest of the world. In this future, we will never “turn off”. [...] In this future, there will be no part of human life, no layer of experience, that is not going to be used for economic value. Ultimately, this will redefine what it means to be human.”

The hermit scenario turned out to be optimistic. Opting out now means opting out of employment, healthcare, and civic participation, not just communication.

“The third future we will consider is called the “mirror world”, a complete representation of the world in digital form. Every person, action, tree, building, wind gust, sun ray, check-out counter and loaf of bread will be captured as a digital twin.”

You would be forgiven for thinking the mirror world is the most far-fetched. In reality, all you need is a camera.

“Some aspects of the mirror world are pretty incredible. The ability to search spaces (images), hyperlinking objects into networks of the physical world, just as the web hyperlinked words. If things and places become machine readable, this opens up a whole new family of algorithms. In this 4D world, it will be possible to scroll forwards and backwards in time. To interact in the mirror world, the centre of interaction will move from the smartphone keyboard to the camera. Yet if we arrive in the mirror world and the business model on offer is still attention, exploitation will reach entirely new heights.”

Transhumanists, individuals fusing themselves with technology, take the mirror world seriously.

“By inserting chip implants in their body, for example, the individual quite literally becomes the sensor, absorbing its capabilities. This will range from the benign, such as swiping your arm to check in at work in the morning, to acquiring additional processing power and memory. Likewise, this will make deeper relations between humans and machines possible, like enhancing technology with human characteristics, uploading information into bots and robots, and, at later stages, brain-to-computer interfaces.”

“Musk’s long-term rationale means hedging against AI wiping the human race out by creating symbiosis between humans and machines.”

This is one of my favourite anecdotes on technological utopianism’s long history of promising connection and delivering something else.

“Back in 1858, when the transatlantic telegraph cable was completed, some heralded world peace. There were fireworks on the day of the inauguration. More connectedness would lead to better understanding of each other.”

It always makes me laugh. Not because it is true. But because it is so, so wrong.

The assumption that more connection produces more understanding has been wrong before. It is wrong again now. The difference is that this time, the infrastructure of connection is also the infrastructure of control. Full totalitarianism is not required to create a self-fulfilling prophecy of deterministic self-correction.

Orwell's warning describes authoritarian capture. Huxley's Brave New World describes the liberal version: drowning in information, indifferent to facts. “Just as competition between liberal democratic, fascist and communist systems defined the 20th century,” I wrote, “identity treatment will define the 21st.” The contest is between authoritarian systems that subordinate the individual to the state and democratic systems that derive legitimacy from individual rights. In each, identity treatment shapes the everyday person’s political voice.

“What few people realise is that George Orwell's famous novel is actually widely misunderstood. The true warning was not a society where you are watched at all times, but one where you could be. The main character narrates: ‘You had to live – did live, from habit that became instinct – in the assumption that every sound you made was overheard, and, except in darkness, every moment scrutinised.’”

Identity Reboot was written to make three arguments. One, privacy erosion erodes human autonomy (independence to decide), and shrinking autonomy and increased AI agency shrink human agency (power to do). Two, identity is the foundation of rights, and the prerequisite for AI accountability. Three, identity treatment determines political systems.

If we cannot make up our mind, or change our mind, democracy is already dead. But the capacity to ask that question, and to insist on an answer, is itself an act of constitutive autonomy.

The allegory of the cave, or Plato's cave, is a dialogue the Greek philosopher Plato attributed to Socrates, describing a group of people chained in a cave, watching the shadows of the outside world on the blank wall in front of them. To the prisoners, the shadow world is reality. LLMs are trained in Plato's cave, and do not leave. AI world models first simulate the world, and then enter the physical world to test this simulation. Plato's prisoners cannot conceive of a world beyond the shadows, and Socrates believed that these prisoners would resist, and even kill, anyone who tried to drag them out of the cave. Let humans not be defined by what AI cannot yet do, but by what only humans choose to become.

“Democracy allows us to institutionalise our voices, creating a world in our own image. But it stops working when we lose our voice. Identity is a fundamental asset of interaction: it is the very first step towards humanity in our data-driven society.”

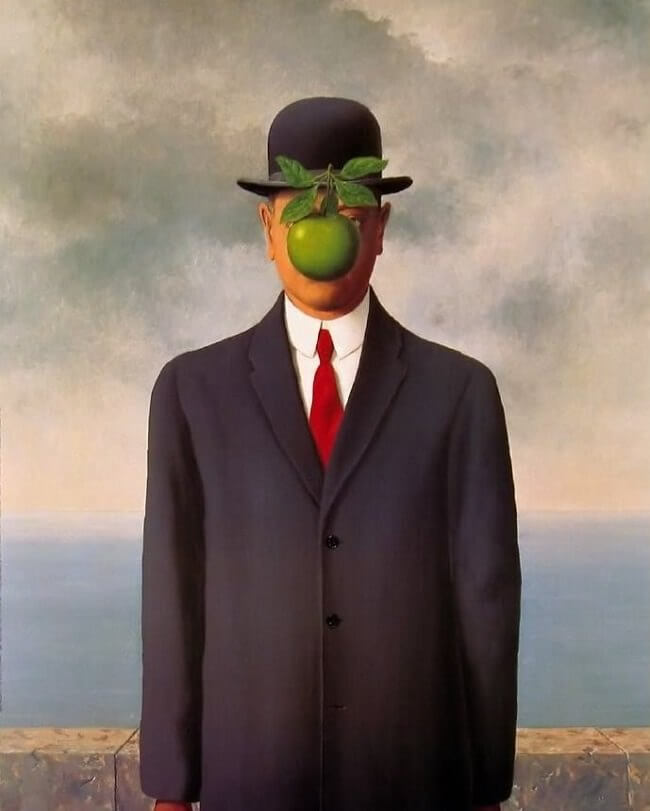

Caspar David Friedrich, Wanderer above the Sea of Fog (1818)

ESSAY 3 — APRIL 2026

Identity is the foundation of rights, and how a society treats identity determines what kind of society it is.

“This book will argue that individual integrity is bigger than any individual. [...]. As the rights of the individual crumble, so too will, eventually, democratic society. “

In 2020, I argued that a direct line connects identity systems to political infrastructure. In the last chapter of the book I even went so far as arguing that different identity regimes would lead to different human futures, as our attitudes to identity would be a proxy for our relationship with AI. When we give the robots wallets, identity, or assigned consciousness, all of the above applies. This was rather radical at the time. Now, decidedly less so.

How a society treats identity is not an abstract philosophical question. It is a policy choice, made daily, in legislation, in procurement, in the design of systems.

“Philosophy professor Michael Sandel taught his Harvard class the following on moral dilemmas: “It is true that these questions have been debated for a very long time, but the very fact they have recurred and persisted, may suggest that although they are impossible in one sense, they are unavoidable in another, and the reason that they are unavoidable, the reason they are inescapable, is that we live some answer to these questions every day.”

Nations live their own answer to these questions. The question is whether they are living it deliberately. Per philosophy professor Nick Bostrom, “to the extent that ethics is a cognitive pursuit, a super intelligence could easily surpass humans in the quality of moral thinking”. What if humans were never the autonomous rational agents the liberal tradition imagined. We are already shaped by biology, culture, language, and institutions. If that is true, the question is not whether AI shapes who you are — everything shapes who you are — but whether AI shapes you better or worse than the alternatives. If autonomy was always partly illusory, what exactly are we losing? My answer to this is not a pristine self that never existed, but the capacity to keep becoming. That is what the renewable identity argument protects. And that is what democracy requires.

Identity is the gateway to rights: if claims (attestations) are verified (authenticated), this empowers somebody or something to act (authorisation).

Nations live their own answer to these questions. The question is whether they are living it deliberately. Per philosophy professor Nick Bostrom, “to the extent that ethics is a cognitive pursuit, a super intelligence could easily surpass humans in the quality of moral thinking”. What if humans were never the autonomous rational agents the liberal tradition imagined. We are already shaped by biology, culture, language, and institutions. If that is true, the question is not whether AI shapes who you are — everything shapes who you are — but whether AI shapes you better or worse than the alternatives. If autonomy was always partly illusory, what exactly are we losing? My answer to this is not a pristine self that never existed, but the capacity to keep becoming. That is what the renewable identity argument protects. And that is what democracy requires.

Identity is the gateway to rights: if claims (attestations) are verified (authenticated), this empowers somebody or something to act (authorisation).

“Claiming something with confidence does not make it true. In the First World War, the Germans disguised one of their ships as a British ship, the RMS Carmania, and sent it out to ambush British vessels. In a hilariously bad stroke of luck, the first ship it encountered was the real RMS Carmania, which promptly sank the German imposter ship”

Charles Edward Dixon (1923), Carmania battling "Carmania"

Three kinds of attestations need to be differentiated: inherent, assigned and accumulated claims. Inherent attributes cannot be altered and are permanent (i.e. date of birth, place of birth and fingerprints). Either a claim is true, or it is not. Assigned attestations describe relationship-based attributes. A government assigns citizenship; immigration can replace it. On verification, the old claim is severed. Accumulated attestations follow the shape of a life. Some build over time — the diplomas earned moving through school, the professional record assembled over a career. Others open and close: a smoking habit enters the health record, and one day, the last cigarette is put out.

In 2026 it is clear that proof of identity extends to proof of humanity. The inverse is also true.

“Things must have an identity to be accountable.“

As collaborative frameworks grow beyond human:human interactions to include agent:agent (i.e. inter-agent bargaining) and human:agent (cooperative AI) relations, identity expands in parallel. For example, for a model inherent claims include parameter count, training data cutoff, and base model lineage. These are permanent in the same sense as a fingerprint. They cannot be altered without producing a different model. For an agent built on top of a model, the inherent layer is the base model identity. Likewise, assigned attestations map to the permission and certification layer. Consider variable safety AI benchmarks, tool access, or governmental compliance. For agents, assigned attestations extend to scope of authority: what the agent is permitted to act on, on whose behalf, and under which oversight conditions. For agents operating with persistent memory or retrieval stores, the accumulated layer grows with task history. Here is the difference. A human cannot fine-tune away their past. A model can. Behavioural records built up in one deployment context do not automatically survive further training, and the attestation chain breaks in ways that have no equivalent in the human case.

Without a stable attestation infrastructure for AI systems, accountability is absent. You cannot hold accountable what you cannot identify.

“Important algorithms will carry IDs, not only dramatically increasing their usefulness but also serving as a point for feedback. Based on these IDs, algorithms can build reputations, and be subject to independent audits based on governance frameworks. For example, evaluation on if the model adequately covers the group of people it aims to address. Because preference models are almost always incomplete, algorithms should know what they do not know.”

Theoretically, this all makes sense, and in 2020 I expected digital identity to shift to the individual: to become self-sovereign. After all, identity changed before.

“As the structure of civilization evolves, so does the role and function humans play in that system. Methods of identification and categorisation advance in parallel to rising societal complexity. A reference point signalling who you are in the sea of almost eight billion people. Sometimes change emerges via a direct reaction to unfolding events, such as the Nansen passport in response to the Russian revolution. At other times change builds gradually, for example the progression from descriptions of large foreheads in the 20th century to biometric data in the 21st century. With the rise of the Internet, how we see ourselves and how our governments see us have stopped being the only identity inputs available. Gradually, subjective behaviour was codified and added to previously objective rights. And not just governments, but also companies granted themselves the licence to tell you who you are.”

In AI safety we measure capability and propensity. That is an instrumentalist frame. A rights-based frame is structurally different: certain things may not be asked of a system, independent of what it could do. How the public understands digital minds will determine whether AI systems hold rights, whether agents can vote, and whether AI is accountable. If agents can vote and outnumber humans, what happens to democratic systems? A right-based approach changes politics, economics, and war. It opens the door to AI-led campaigns and political parties advocating for AI welfare and rights. Similarly, visions of AGI abundance still need taxation: if public infrastructure is funded through fully autonomous agent-led companies, is 100% taxation moral? Lastly, if AI is believed to be conscious by the human majority, what does that mean for warfare and international humanitarian law?

What to watch in 2026 and beyond is how we trust and how we judge AI. There already are areas where humans trust AI more than themselves. Writing is one of them, as evidenced by the exponential AI content explosion. In the book, I highlighted research from the scholar César Hidalgo, comparing judgment of humans and machines in eighty scenarios.

“In a subway station, an officer sees a person carrying a suspicious package who matches the description of a known terrorist. The ...” (Human).”

“In a subway station, a computer vision system sees a person carrying a suspicious package who matches the description of a known terrorist. The ...” (Machine).”

The conclusion of the paper addressed the morality of markets: there are certain places machines do not belong. A decade later, machines are everywhere. Continuous and critical evaluation is required if we use digital logic for problems that require human logic, or the other way around. I argued that how society treats identity is a proxy for how it views AI. Whether we take a rights-based view or an instrumentalist based-view will show what we value, and what deserves to be protected. AI already defines us by negation. We now argue that humanity is everything AI cannot yet do. That used to be chess; now it is interactive planning. Our emotional relation with and moral understanding of AI is changing in real time.

That boundary — between where machines belong and where they do not — was never formally drawn. The three futures I outlined in 2020 are each, in part, a consequence of that failure to draw it: the digital “haves” and “have nots”, permanent connectivity, and the mirror world.

“The first future will be defined by the digital “haves” and “have nots”. In this future, online and offline inequality will continue being captured in identity. Take, for example, Afghanistan, which currently has the largest gender gap in ID coverage, with 94 percent of men owning IDs, compared to only 48 percent of women. Ironically, in a world of hypervisibility, invisibility is still a problem for millions. The World Bank reports that globally close to 40 percent of the population aged over 15 in low-income countries do not have an ID. If you are not counted, you do not count. The recent refugee crisis in Europe has further brought home just how important portable, verifiable identity has become in a globalised world. [...] The World Economic Forum advises that if we act wisely today, digital identities can help transform the future for billions of individuals, all over the world, enabling access to new economic, political and social opportunities, while enjoying digital safety, privacy and other human rights. This is the choice between a future that is either more, or less, equal.”

In 2026, the gap has not closed. It has bifurcated: the invisible remain invisible, while the visible are more legible than ever.

“The second possible future is one of permanent connectivity. In a world with permanent connectivity, what happens if you do not want to share information? As a British man who told facial recognition trial administrators to leave him alone (“piss off”) found out first hand, insisting on complete privacy would mean a life spent completely offline, cut off from all communication (messaging), information (online content), outside space (cameras), and payment functionalities (digitally logged). Even encryption alone would not suffice, as quantum computing will make it possible to break most encryption retroactively. If you decided you wanted to go down this route, you would have to become a hermit, opting out of all interaction with the rest of the world. In this future, we will never “turn off”. [...] In this future, there will be no part of human life, no layer of experience, that is not going to be used for economic value. Ultimately, this will redefine what it means to be human.”

The hermit scenario turned out to be optimistic. Opting out now means opting out of employment, healthcare, and civic participation, not just communication.

“The third future we will consider is called the “mirror world”, a complete representation of the world in digital form. Every person, action, tree, building, wind gust, sun ray, check-out counter and loaf of bread will be captured as a digital twin.”

You would be forgiven for thinking the mirror world is the most far-fetched. In reality, all you need is a camera.

“Some aspects of the mirror world are pretty incredible. The ability to search spaces (images), hyperlinking objects into networks of the physical world, just as the web hyperlinked words. If things and places become machine readable, this opens up a whole new family of algorithms. In this 4D world, it will be possible to scroll forwards and backwards in time. To interact in the mirror world, the centre of interaction will move from the smartphone keyboard to the camera. Yet if we arrive in the mirror world and the business model on offer is still attention, exploitation will reach entirely new heights.”

Transhumanists, individuals fusing themselves with technology, take the mirror world seriously.

“By inserting chip implants in their body, for example, the individual quite literally becomes the sensor, absorbing its capabilities. This will range from the benign, such as swiping your arm to check in at work in the morning, to acquiring additional processing power and memory. Likewise, this will make deeper relations between humans and machines possible, like enhancing technology with human characteristics, uploading information into bots and robots, and, at later stages, brain-to-computer interfaces.”

“Musk’s long-term rationale means hedging against AI wiping the human race out by creating symbiosis between humans and machines.”

This is one of my favourite anecdotes on technological utopianism’s long history of promising connection and delivering something else.

“Back in 1858, when the transatlantic telegraph cable was completed, some heralded world peace. There were fireworks on the day of the inauguration. More connectedness would lead to better understanding of each other.”

It always makes me laugh. Not because it is true. But because it is so, so wrong.

The assumption that more connection produces more understanding has been wrong before. It is wrong again now. The difference is that this time, the infrastructure of connection is also the infrastructure of control. Full totalitarianism is not required to create a self-fulfilling prophecy of deterministic self-correction.

“What few people realise is that George Orwell's famous novel is actually widely misunderstood. The true warning was not a society where you are watched at all times, but one where you could be. The main character narrates: “You had to live – did live, from habit that became instinct – in the assumption that every sound you made was overheard, and, except in darkness, every movement scrutinised.””

Orwell's warning describes authoritarian capture. Huxley's Brave New World describes the liberal version: drowning in information, indifferent to facts. “Just as competition between liberal democratic, fascist and communist systems defined the 20th century,” I wrote, “identity treatment will define the 21st.” The contest is between authoritarian systems that subordinate the individual to the state and democratic systems that derive legitimacy from individual rights. In each, identity treatment shapes the everyday person’s political voice.

“Democracy allows us to institutionalise our voices, creating a world in our own image. But it stops working when we lose our voice. Identity is a fundamental asset of interaction: it is the very first step towards humanity in our data-driven society.”

Identity Reboot was written to make three arguments. One, privacy erosion erodes human autonomy (independence to decide), and shrinking autonomy combined with increased AI agency shrinks human agency (power to do). Two, identity is the foundation of rights, and the prerequisite for AI accountability. Three, identity treatment determines political systems.

If we cannot make up our mind, or change our mind, democracy is already dead. But the capacity to ask that question, and to insist on an answer, is itself an act of constitutive autonomy.

The allegory of the cave, or Plato's cave, is a dialogue the Greek philosopher Plato attributed to Socrates, describing a group of people chained in a cave, watching the shadows of the outside world on the blank wall in front of them. To the prisoners, the shadow world is reality. LLMs are trained in Plato's cave, and do not leave. AI world models first simulate the world, and then enter the physical world to test this simulation. Plato's prisoners cannot conceive of a world beyond the shadows, and Socrates believed that these prisoners would protect themselves against, and even kill, anyone who tried to drag them out of the cave. Let humans not be defined by what AI cannot yet do, but by what only humans choose to become.

Caspar David Friedrich, Wanderer above the Sea of Fog (1818)